A root object that holds a tail of cacheable objects. More...

#include <elementary/memcore/CacheQueue.hpp>

Classes | |

| struct | Iterator |

| iterator class More... | |

Public Member Functions | |

| bool | adjust (const CacheBase &theCache, memsize_t newCost, CacheQueue &TemporarilyUnreleasable, CacheQueue &PermanentlyUnreleasable) |

| Try to adjust the cache by releasing objects from the queue until the new cost is achieved. | |

| memsize_t | compute_memsize () const |

| Internal Debugging Function which computes the memsize by inspecting and summing up each object's contribution. | |

| int | iterate (Iterator &It) const |

| iterate over all elements here | |

| void | list_info (int Verbosity=50) const |

| Show debug info. | |

| memsize_t | memsize () const override |

| The CacheQueue object will return the size occupied by all cached objects. | |

| int | nEntries () const |

| Count the number of entries here. | |

| memsize_t | purge () |

| Remove all objects from the Cache. | |

| void | push_front (const WeakPtr< Cacheable > &Ca) |

| Add Cacheable object to the front of the queue. | |

| void | put_in_front (const WeakPtr< Cacheable > &Ca) |

| Ensure the given object is in the front of the queue even if it is already contained somewhere else. | |

| ~CacheQueue () | |

| Destructor. | |

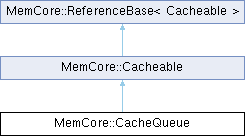

| Public Member Functions inherited from MemCore::Cacheable | |

| Cacheable (const WeakPtr< Creature > &C) | |

| Constructor. | |

| virtual void | DeferredConstructor () |

| A virtual function that is called on the first strong referencing of a Cacheable object. | |

| bool | isCached () const |

| Check whether this object is managed by a cache queue. | |

| bool | isUncached () const |

| Check whether this object is managed by a cache queue. | |

| bool | markAsUsed () |

| Mark this data object as being recently used. | |

| WeakPtr< CacheBase > | myCache () const |

| Return the associated Cache object as known from the associated Creator, if any. | |

| const WeakPtr< Creature > & | myCreator () const |

| Return the associated Creator, if any. | |

| int | NumberOfListEntries () const |

| Count the number of entries here. | |

| void | PreDestructor () |

| This class uses a predestructor to remove itself's size from the cache queue. | |

| void | push_back (CacheQueue &Q) |

| Put the current item onto the BACK of the given cache queue. | |

| void | push_front (CacheQueue &Q) |

| Put the current item onto the FRONT of the given cache queue. | |

| void | setCreator (const WeakPtr< Creature > &C) |

| Set and the creator. | |

| Public Member Functions inherited from MemCore::ReferenceBase< Cacheable > | |

| auto | getObjectCountID () const noexcept |

| Get a unique ID for this object in the given domain. | |

| bool | isIdentical (const WeakPtr< Cacheable, Cacheable > &PossibleSelf) const noexcept |

| Check if this object is identical to the one used by the given pointer. | |

| void | mkAutoDestructive () |

| Marks this object as being automatically destructed, e.g. | |

| refcount_t | refcount () const noexcept |

| The strong reference count. | |

| ReferenceBase (Cacheable *that) noexcept | |

| Constructor, initializes reference counter to zero. | |

| const auto & | self () const |

| Return weak pointer to the object self. | |

| refcount_t | wrefcount () const noexcept |

| The weak reference count. | |

Additional Inherited Members | |

| Public Types inherited from MemCore::Cacheable | |

| using | cachecounter_t = unsigned long |

| The type to store repeated accesses to a cached object. | |

| Public Types inherited from MemCore::ReferenceBase< Cacheable > | |

| using | reference_domain_t |

| The type of the base class. | |

| using | SelfPtr_t |

| Type for a pointer to this object itself. | |

| Protected Member Functions inherited from MemCore::Cacheable | |

| void | adjustCacheableSize (memsize_t memDiff) |

| In case this object changes its size after insertion to the CacheQueue, call this function to adjust. | |

| ~Cacheable () | |

| Destructor. | |

| Protected Member Functions inherited from MemCore::ReferenceBase< Cacheable > | |

| virtual void | extremeUnction () |

| A virtual function that will be called just before the object is destroyed. | |

| ReferenceBase & | operator= (const ReferenceBase &R) |

| Protected assignment operator (should not be called). | |

| void | suicide () |

| Delete this. | |

| virtual | ~ReferenceBase () |

| Virtual destructor. | |

Detailed Description

A root object that holds a tail of cacheable objects.

Intrusive, thread-aware cache queue with fine-grained locking.

This class implements a doubly-linked intrusive list used for cache management. Each queue is itself a Cacheable node acting as the sentinel element. The design uses two levels of locking:

- A queue-level recursive mutex (CacheQueue::myMutex) protects queue-wide invariants such as:

- membership changes (push_front / push_back)

- queue statistics (CurrentMemsize, CurrentEntries)

- ordering operations

- A node-level mutex (Cacheable::mtx) protects node-local invariants:

- prev / next pointer updates

- myQueue pointer

- unlink / relink operations

This separation allows fine-grained concurrency:

- Threads operating on different nodes do not block each other.

- Queue operations serialize only when necessary.

- Node operations remain safe even when the node is not in a queue.

The queue mutex is recursive because a node may be moved within the same queue, causing the queue to be locked twice in the same call path. This avoids deadlocks without requiring global lock ordering.

Performance Considerations

The two-mutex design is intentionally chosen for performance:

- Low contention: Node-level operations (unlink, relink, inspection) do not require locking the entire queue.

- Parallelism: Multiple threads can manipulate different nodes concurrently without serializing on a single global lock.

- Locality: Pointer updates are protected by the node�s own mutex, minimizing the critical section and reducing cache-line bouncing.

- Scalability: Queue-level operations (e.g., LRU updates) do not block unrelated node operations, improving throughput under load.

This pattern is commonly used in high-performance caches and intrusive container libraries. For background and similar designs, see:

- Fine-Grained Locking (Herlihy & Shavit, The Art of Multiprocessor Programming)

- Facebook Folly intrusive containers: https://github.com/facebook/folly

- Linux kernels list.h and RCU-based list manipulation

Together, these choices provide a good balance of safety, performance, and flexibility for cache structures that must support concurrent access and node migration between queues.

Member Function Documentation

◆ adjust()

| bool MemCore::CacheQueue::adjust | ( | const CacheBase & | theCache, |

| memsize_t | newCost, | ||

| CacheQueue & | TemporarilyUnreleasable, | ||

| CacheQueue & | PermanentlyUnreleasable ) |

Try to adjust the cache by releasing objects from the queue until the new cost is achieved.

- Parameters

-

theCache The Cache management object newCost The memory occupation of the object that is supposed to be inserted soon. TemporarilyUnreleasable A CacheQueue where objects will be put that appear to be temporarily unreleasable. PermanentlyUnreleasable A CacheQueue for objects that seem to be unreleasable at all.

References adjust(), compute_memsize(), MemCore::CacheBase::ElementDescription, list_info(), MemCore::CacheBase::maxCost(), MemCore::Cacheable::memsize(), memsize(), MemCore::VerboseStream::MemString(), MemCore::Cacheable::myCreator(), nEntries(), MemCore::Cacheable::NumberOfListEntries(), push_front(), Wizt::ReferenceBase< Object >::self(), MemCore::DynPtr< Object, ObjectBase >::Speak(), MemCore::CacheBase::totalCost(), and MemCore::Cache::verbosity().

Referenced by adjust().

◆ compute_memsize()

| memsize_t MemCore::CacheQueue::compute_memsize | ( | ) | const |

Internal Debugging Function which computes the memsize by inspecting and summing up each object's contribution.

The CacheQueue object will return the size occupied by all cached objects.

This function will be slow as compared to memsize(), and is only intended to be used occasionally for verifying that memsize() does return the correct value. How it actually should work is cacheable objects adding and subtracting themselves from the cachequeue overall memory size.

References compute_memsize(), and MemCore::Cacheable::memsize().

Referenced by adjust(), compute_memsize(), and purge().

◆ nEntries()

| int MemCore::CacheQueue::nEntries | ( | ) | const |

Count the number of entries here.

Never call this function, it is maximally slow.

References nEntries(), and MemCore::Cacheable::NumberOfListEntries().

Referenced by adjust(), and nEntries().

Generated on for The Vish Visualization Shell by